The key to Kubernetes: have you got it?

Seven keys to using Kubernetes

Today, the industry no longer questions the value of containers. Given the trend toward DevOps and cloud architecture, using containers to automate enterprise business processes is very important. Kubernetes (k8s), the open source container orchestration system that originated at Google, has become the de facto standard for cloud-native applications. More and more enterprises are using Kubernetes to build seamless, scalable modern architectures composed of microservices and serverless functions.

However, companies investing in container-based applications often struggle to realize the value of Kubernetes and container technology, largely because of operational challenges. In this article, we share the seven basic capabilities that large international companies need to invest in around Kubernetes so that they can implement it effectively and use it to drive their business.

Managed Kubernetes services ensure SLAs and simplify operations

While it may be controversial for some, let's start with the most critical point: for production environments and mission-critical applications, don't fall into the Kubernetes DIY trap.

Yes, Kubernetes works well, containers are the future of modern software delivery, and Kubernetes is the best practice for orchestrating containers. But as we all know, managing enterprise workloads, where SLAs are very important, is highly complex.

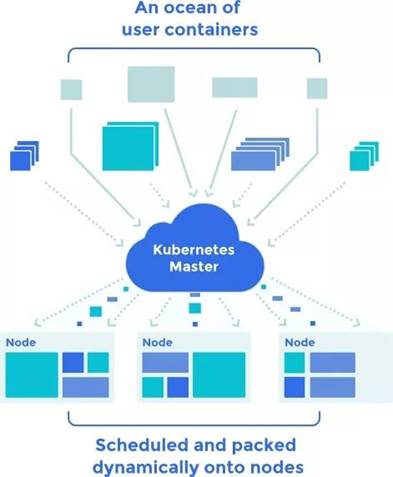

Simply put, Kubernetes controls how the groups of containers (pods) that make up an application are scheduled, deployed, and scaled, and how they use the network and underlying storage. After a Kubernetes cluster is deployed, the IT operations team must decide how to expose these pods to consuming applications through request routing, and how to ensure their health, high availability (HA), zero-downtime environment upgrades, and so on. As the cluster grows, IT needs to let developers get up and running in seconds, enable monitoring and troubleshooting, and ensure smooth operation.
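
To make the health and zero-downtime concerns above concrete, here is a minimal sketch that builds a Deployment manifest as a plain Python dictionary. The application name, image path, port, and `/healthz` endpoint are hypothetical placeholders, and the values chosen for the rolling update are only one reasonable starting point, not a recommendation from the original article.

```python
import json


def build_deployment(name: str, image: str, replicas: int = 3, port: int = 8080) -> dict:
    """Return an apps/v1 Deployment manifest tuned for zero-downtime rollouts."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            # Never take an old pod away before a new one is ready.
            "strategy": {
                "type": "RollingUpdate",
                "rollingUpdate": {"maxUnavailable": 0, "maxSurge": 1},
            },
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            "ports": [{"containerPort": port}],
                            # Traffic is only routed to pods that pass this check.
                            # The /healthz path is an assumed endpoint of the app.
                            "readinessProbe": {
                                "httpGet": {"path": "/healthz", "port": port},
                                "periodSeconds": 5,
                            },
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical application name and image reference.
    manifest = build_deployment("web", "registry.example.com/web:1.0.0")
    print(json.dumps(manifest, indent=2))
```

Because `kubectl apply -f` accepts JSON as well as YAML, the printed output could be piped into `kubectl apply -f -` on a test cluster.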

We have found that the biggest challenge for customers is operating and maintaining Kubernetes after installation. Once the initial installation is done, problems arise:

1. Configuring persistent storage and networking at scale
2. Keeping up with the fast-moving Kubernetes community releases
3. Patching applications and the underlying Kubernetes version for security and regular updates
4. Setting up and maintaining monitoring and logging
5. Disaster recovery
6. And more

The pain of operating production-grade Kubernetes is compounded by an industry-wide shortage of talent and skills. Today, most organizations find it hard to hire highly sought-after Kubernetes experts, and they lack the advanced Kubernetes experience needed to ensure smooth operation at scale.

A managed Kubernetes service essentially provides enterprise-grade Kubernetes without the operational burden. These services may be offered exclusively by public cloud providers such as AWS or Google Cloud, or they may be solutions that let organizations run Kubernetes in their own data centers or in hybrid / multi-cloud environments.

Even with managed services, note that different types of solutions use "managed" or "Kubernetes-as-a-service" to describe very different levels of management. Some services only let you deploy a Kubernetes cluster in a simple self-service way; others take over some of the ongoing operations of managing the cluster; and still others provide a fully managed service that does all the heavy lifting for you, offers an SLA guarantee, and requires no management overhead or Kubernetes expertise from the customer.

For example, with a third-party managed Kubernetes-as-a-service offering, you can have enterprise Kubernetes up and running in less than an hour, in any environment. By delivering Kubernetes as a fully managed service, such an offering eliminates the operational complexity of Kubernetes at scale and includes enterprise-grade features out of the box: automatic upgrades, multi-cluster operations, high availability, monitoring, and more, all handled automatically and backed by a 24x7x365 SLA.

Kubernetes will be the backbone of your software innovation. A DIY approach will cause the business unnecessary trouble. Choosing a managed service from the start sets the enterprise up for success.

Cluster monitoring and logging

Production Kubernetes deployments commonly scale to hundreds of pods. Without effective monitoring and logging, serious faults may go undiagnosed, leading to service interruptions that hurt customer satisfaction and the business.

Monitoring provides visibility and detailed metrics for the Kubernetes infrastructure. This includes fine-grained usage and performance metrics across all cloud providers or on-premises data centers, regions, servers, networks, storage, and individual VMs or containers. A key use of these metrics should be to improve data center efficiency and the utilization (which translates into cost) of on-premises and public cloud resources.

Logging complements effective monitoring. It ensures that logs are captured at every level of the architecture, from the Kubernetes infrastructure and its components to the applications, for analysis, troubleshooting, and diagnostics. Centralized log management and visualization across a distributed system is a key capability that can be met with proprietary tools or with open source tools such as Fluent Bit, Fluentd, Elasticsearch, and Kibana (also known as the EFK stack).
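
On the application side, centralized logging is easier when each container writes structured, one-line log records to standard output, where a node-level collector can pick them up. The following is a minimal sketch using only the Python standard library; the field names (`time`, `level`, `logger`, `message`) and the `checkout` logger name are illustrative assumptions rather than a schema required by any of the tools above.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object on one line."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "time": self.formatTime(record, "%Y-%m-%dT%H:%M:%S"),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        if record.exc_info:
            # Keep stack traces inside the same JSON record.
            entry["exception"] = self.formatException(record.exc_info)
        return json.dumps(entry)


def make_logger(name: str, stream=None) -> logging.Logger:
    """Create a logger that writes JSON lines to stdout (or a given stream)."""
    handler = logging.StreamHandler(stream if stream is not None else sys.stdout)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


if __name__ == "__main__":
    # Hypothetical service name and order id.
    make_logger("checkout").info("order placed: %s", "A-1001")
```

Emitting one JSON object per line keeps parsing simple for whichever log collector is deployed, and avoids multi-line stack traces being split into separate events.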

Monitoring should not only run around the clock; it should also provide proactive alerts for bottlenecks or problems that may arise in production, along with role-based dashboards containing KPIs on performance, capacity management, and so on.
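
As an illustration of what a proactive alert amounts to, the sketch below fires only when a metric has stayed above a threshold for several consecutive samples, which avoids paging on a single spike. The `AlertRule` type, the metric name, and the sample values are all hypothetical; a real monitoring system would supply its own rule format.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class AlertRule:
    metric: str          # name of the metric being watched (illustrative)
    threshold: float     # alert when values exceed this
    for_samples: int     # consecutive samples that must exceed the threshold

    def __post_init__(self) -> None:
        if self.for_samples < 1:
            raise ValueError("for_samples must be at least 1")


def firing(rule: AlertRule, samples: Sequence[float]) -> bool:
    """Return True when the most recent `for_samples` values all exceed the threshold."""
    recent = samples[-rule.for_samples:]
    if len(recent) < rule.for_samples:
        return False  # not enough history yet
    return all(value > rule.threshold for value in recent)


if __name__ == "__main__":
    rule = AlertRule(metric="cpu_utilization", threshold=0.90, for_samples=3)
    print(firing(rule, [0.50, 0.95, 0.96, 0.97]))  # sustained breach
    print(firing(rule, [0.95, 0.96, 0.50, 0.97]))  # breach interrupted by a dip
```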

Registry and package management - Helm / Terraform

A private registry server is an important capability because it stores Docker images securely. The registry supports image management workflows, including image signing, security, LDAP integration, and so on. Package managers such as Helm provide a template (called a "chart" in Helm) for defining, installing, and upgrading Kubernetes-based applications.

Once developers have successfully built their code, ideally they regenerate the Docker image using the registry, and that image is ultimately deployed to a set of target pods using a Helm chart.
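
The build-then-deploy flow above can be sketched as two small helpers: one that composes an image reference for the private registry, and one that assembles a `helm upgrade --install` command pointing the chart at that image. The registry host, repository, chart path, and release name are hypothetical, and the `image.repository` / `image.tag` value names are an assumption about how the chart is written (they are a common convention, not something Helm enforces).

```python
import shlex


def image_ref(registry: str, repository: str, tag: str) -> str:
    """Compose a full image reference, e.g. registry/repository:tag."""
    return f"{registry}/{repository}:{tag}"


def helm_upgrade_cmd(release: str, chart: str, namespace: str,
                     registry: str, repository: str, tag: str) -> list[str]:
    """Build the argument list for installing or upgrading a release."""
    return [
        "helm", "upgrade", "--install", release, chart,
        "--namespace", namespace,
        # Assumed chart values; adjust to match the chart's values.yaml.
        "--set", f"image.repository={registry}/{repository}",
        "--set", f"image.tag={tag}",
    ]


if __name__ == "__main__":
    # Hypothetical values; the tag would typically be the git commit SHA.
    cmd = helm_upgrade_cmd("web", "./charts/web", "prod",
                           "registry.example.com", "shop/web", "3f2a1c9")
    print(image_ref("registry.example.com", "shop/web", "3f2a1c9"))
    print(shlex.join(cmd))
```

Tagging images with the commit SHA rather than `latest` is one way to make rollbacks unambiguous, since each release then maps to exactly one image.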

This simplifies the CI/CD pipeline and release process for Kubernetes-based applications. Developers can more easily collaborate on their applications, version code changes, ensure deployment and configuration consistency, ensure compliance and security, and roll back to a previous version when needed. The registry, together with package management, ensures that the correct image is deployed to the correct container and that security is built into the process.

CI/CD toolchain for DevOps

Enabling a CI/CD pipeline is essential for improving the quality, security, and release speed of Kubernetes-based applications.

Today, continuous integration pipelines (unit tests, integration tests, and so on) and continuous delivery pipelines (for example, the deployment process from development to production) are usually configured with GitOps methods and tools. The developer workflow typically starts with a "git push": each code check-in triggers the build, test, and deployment processes. This includes automated blue-green or canary deployments using Spinnaker or other tools. Importantly, the Kubernetes infrastructure should plug easily into these CI/CD tools so that your developers can improve their productivity and the quality of their releases.
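
The core decision in a canary deployment can be sketched in a few lines: shift traffic to the new version in steps, compare its error rate with the baseline at each step, and roll back at the first unhealthy step. This is a simplified illustration, not how Spinnaker or any particular tool implements it; the traffic weights, the `tolerance` value, and the `error_rate_at` callback are hypothetical.

```python
from typing import Callable, Sequence, Tuple


def run_canary(weights: Sequence[int],
               error_rate_at: Callable[[int], float],
               baseline: float,
               tolerance: float = 0.01) -> Tuple[str, int]:
    """Walk through traffic weights (percent) and decide to promote or roll back.

    error_rate_at(weight) is assumed to return the canary's observed error
    rate while it receives `weight` percent of traffic.
    """
    if not weights:
        raise ValueError("at least one traffic weight is required")
    for weight in weights:
        if error_rate_at(weight) > baseline + tolerance:
            return ("rollback", weight)  # stop at the first unhealthy step
    return ("promote", weights[-1])


if __name__ == "__main__":
    steps = [5, 25, 50, 100]
    # Hypothetical measurements: the canary degrades once it gets half the traffic.
    degraded = lambda w: 0.05 if w >= 50 else 0.001
    print(run_canary(steps, degraded, baseline=0.001))
```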

Cluster provisioning and load balancing

Production-grade Kubernetes infrastructure usually requires highly available, multi-master, multi-etcd Kubernetes clusters that can span availability zones in private or public cloud environments. Provisioning these clusters usually involves tools such as Ansible or Terraform.
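
One reason multi-etcd clusters are sized with odd member counts is quorum arithmetic: etcd needs a majority of members to agree before it commits a write. The short sketch below works through that arithmetic; the function names are illustrative.

```python
def quorum(members: int) -> int:
    """Smallest number of members that forms a majority."""
    if members < 1:
        raise ValueError("a cluster needs at least one member")
    return members // 2 + 1


def fault_tolerance(members: int) -> int:
    """How many members can fail while a majority remains."""
    return members - quorum(members)


if __name__ == "__main__":
    for n in (1, 3, 4, 5):
        print(f"{n} members: quorum {quorum(n)}, tolerates {fault_tolerance(n)} failure(s)")
```

Three members tolerate one failure and five tolerate two, while four members still tolerate only one, so adding a fourth member increases cost without improving availability.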

Once the cluster is set up and pods are created to run applications, a load balancer is responsible for routing traffic to the services. The load balancer is not a native capability of the open source Kubernetes project, so you need to integrate with products such as the NGINX Ingress controller, HAProxy, or ELB (on an AWS VPC), or with other tools that extend the Ingress plugin in Kubernetes, to provide load balancing.
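
As a rough illustration of routing traffic through an Ingress controller, the sketch below builds a `networking.k8s.io/v1` Ingress manifest as a Python dictionary. The host name, service name, and port are hypothetical, and the `nginx` ingress class assumes that an NGINX Ingress controller registered under that class name is already installed in the cluster.

```python
import json


def build_ingress(name: str, host: str, service: str, port: int,
                  ingress_class: str = "nginx") -> dict:
    """Return an Ingress manifest routing all paths on `host` to one Service."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {
            # Selects which installed Ingress controller handles this resource.
            "ingressClassName": ingress_class,
            "rules": [
                {
                    "host": host,
                    "http": {
                        "paths": [
                            {
                                "path": "/",
                                "pathType": "Prefix",
                                "backend": {
                                    "service": {"name": service, "port": {"number": port}}
                                },
                            }
                        ]
                    },
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical host and Service names.
    print(json.dumps(build_ingress("web", "shop.example.com", "web", 80), indent=2))
```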

Security

There is no doubt that security is a key part of cloud-native applications and needs to be considered and designed in from the beginning. Security is a constant throughout the life cycle of a container, and it affects the design, development, DevOps practices, and infrastructure choices of container-based applications. A range of technology options is available to cover different areas, such as application-level security and the security of the containers and the infrastructure itself. These include role-based access control, multi-factor authentication (MFA), and authentication and authorization (A&A) using OAuth, OpenID, SSO, and other protocols, as well as tools (such as an image registry, image signing, packaging, and CVE scanning) that provide verification and security for the content inside the container itself.
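
Role-based access control in Kubernetes is expressed as Role and RoleBinding objects. The sketch below generates a read-only Role for pods in one namespace and binds it to a user; the namespace, role name, and user name are hypothetical, and how the user is authenticated (OAuth, OpenID, SSO) is outside the scope of this example.

```python
import json

RBAC_GROUP = "rbac.authorization.k8s.io"


def read_only_pod_role(namespace: str, name: str = "pod-reader") -> dict:
    """Role that can read pods and their logs, but change nothing."""
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "Role",
        "metadata": {"namespace": namespace, "name": name},
        "rules": [
            {
                "apiGroups": [""],  # "" is the core API group
                "resources": ["pods", "pods/log"],
                "verbs": ["get", "list", "watch"],
            }
        ],
    }


def bind_role_to_user(namespace: str, role_name: str, user: str) -> dict:
    """RoleBinding granting `role_name` to a single user in one namespace."""
    return {
        "apiVersion": f"{RBAC_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"namespace": namespace, "name": f"{role_name}-{user}"},
        "subjects": [{"kind": "User", "name": user, "apiGroup": RBAC_GROUP}],
        "roleRef": {"kind": "Role", "name": role_name, "apiGroup": RBAC_GROUP},
    }


if __name__ == "__main__":
    # Hypothetical namespace and user.
    role = read_only_pod_role("staging")
    binding = bind_role_to_user("staging", role["metadata"]["name"], "jane")
    print(json.dumps([role, binding], indent=2))
```

Scoping the Role to a namespace and to read-only verbs follows the least-privilege principle: the user can inspect workloads without being able to modify them.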

Governance

Processes around governance, auditing, and compliance are a sign of the growing maturity of Kubernetes, and of the growing number of Kubernetes-based applications in large enterprises and regulated industries. Your Kubernetes infrastructure and the associated release processes need to integrate with tools that provide visibility and an automatic audit trail of the different tasks and permission levels behind any update to your Kubernetes applications or infrastructure, so that compliance can be properly enforced.
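
As one possible building block for such an audit trail, the sketch below reduces Kubernetes API server audit events to a "who changed what, and when" summary. It assumes audit logging is enabled and that the log holds one JSON-encoded `audit.k8s.io/v1` event per line; the sample event in the usage section is made up for illustration.

```python
import json
from typing import Iterable

# Verbs that modify cluster state; read-only verbs are skipped.
MUTATING_VERBS = {"create", "update", "patch", "delete", "deletecollection"}


def summarize_changes(lines: Iterable[str]) -> list[dict]:
    """Extract who/what/when for every mutating request in an audit log."""
    changes = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        if event.get("verb") not in MUTATING_VERBS:
            continue
        ref = event.get("objectRef", {})
        changes.append({
            "when": event.get("requestReceivedTimestamp"),
            "who": event.get("user", {}).get("username"),
            "verb": event["verb"],
            "resource": ref.get("resource"),
            "namespace": ref.get("namespace"),
            "name": ref.get("name"),
        })
    return changes


if __name__ == "__main__":
    # Made-up sample event using audit.k8s.io/v1 field names.
    sample = json.dumps({
        "verb": "patch",
        "user": {"username": "jane"},
        "objectRef": {"resource": "deployments", "namespace": "prod", "name": "web"},
        "requestReceivedTimestamp": "2024-01-01T12:00:00.000000Z",
    })
    for change in summarize_changes([sample]):
        print(change)
```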

All in all, enabling Kubernetes for enterprise, mission-critical applications requires more than just deploying a Kubernetes cluster. Using a managed Kubernetes service on the public cloud helps enterprise users cover the seven key considerations above and helps ensure that the Kubernetes infrastructure is designed for production workloads.

Silver Lining focuses on providing enterprise customers with professional public cloud architecture consulting, design, deployment, and implementation services, cloud operations and maintenance services, and cloud billing management services. For the pain points and difficulties enterprise customers face when deploying container services in the cloud, Silver Lining can help enterprises deploy and maintain containers quickly and securely!